In September 2025, Education in Motion (EiM) launched its Education Advisory Board (EAB), bringing together leading voices from across higher education, policy-making and global research. As the group prepares for the Board's inaugural summit at Dulwich College (Singapore) on 27 April, a distinctive student-led thought leadership initiative is happening across the family of schools.

Through a series of in-depth interviews with EAB members, students collaborate across our sister schools, engaging directly with distinguished global experts and exploring cross-disciplinary perspectives spanning leadership, research, education, and the skills needed to thrive in an ever-evolving future.

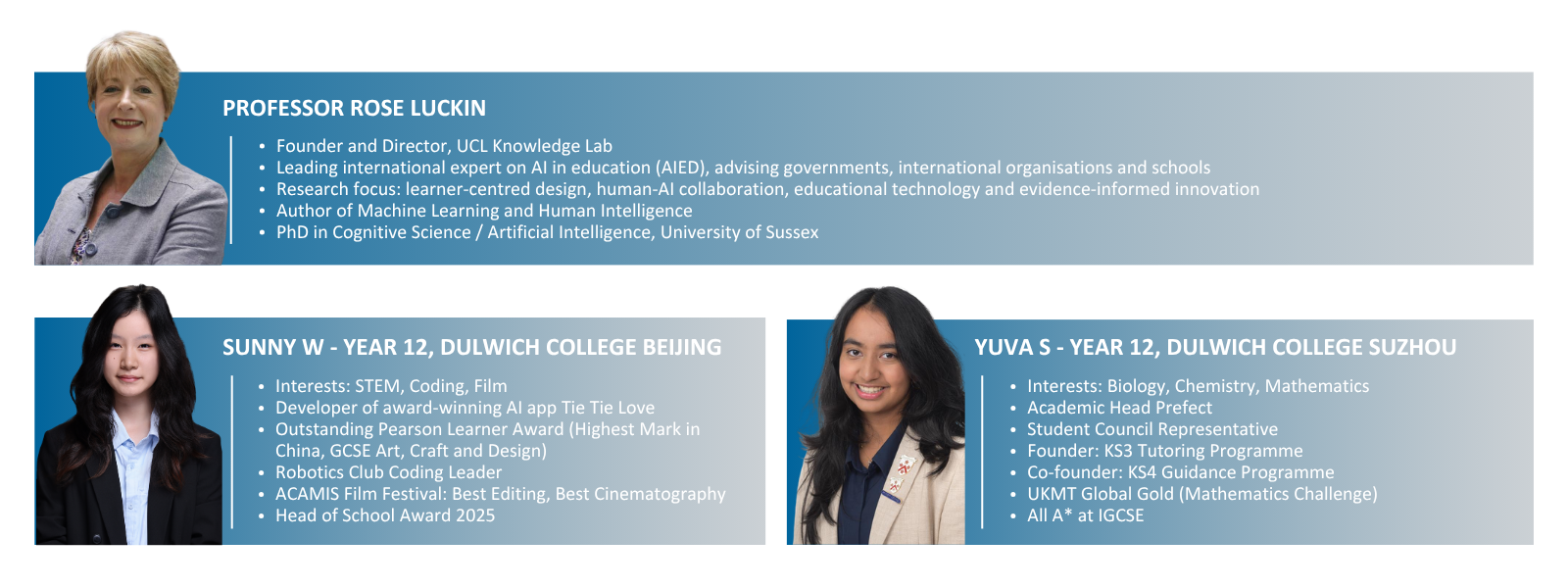

The first episode features Professor Rose Luckin, from Learner-Centred Design at University College London, a leading authority on artificial intelligence in education. Through questions led by students from Dulwich College Beijing and Dulwich College Suzhou, the conversation explores how AI is reshaping learning, creativity and the purpose of education itself.

Personal Pathways and Intellectual Discipline

Sunny and Yuva began by examining the personal and intellectual foundations behind Professor Luckin’s work, exploring how creativity, constraint and curiosity shape both scientific inquiry and human learning.

Sunny W: What first drew you to the intersection of education and technology? Was there a particular moment that set you on this path?

I came to AI later than many. After completing my A-levels and raising two young children, I decided to go to university and initially considered economics. While reading a prospectus, I found this subject called ‘artificial intelligence’ and was recommended the book Gödel, Escher, Bach by Douglas Hofstadter. That book asked questions I found deeply compelling and sparked my interest.

I applied to study computer science and AI and never looked back. What drew me in was its interdisciplinary nature—combining programming and theoretical computer science with philosophy, psychology and linguistics. That moment defined my path.

Yuva S: What personal challenges did you face on your journey, and how did you overcome them?

It wasn't straightforward, doing a degree with two children under five. I had limited time, so I concentrated only on what was necessary to succeed, which narrowed my undergraduate experience.

I was also often the only woman on my course. Initially, I had to prove myself, but once my fellow students realised that I wasn't a token woman, that I took it seriously and that I could program, we all got on immensely well.

Balancing family and study was challenging, but it also provided perspective and it relieved the stress of my studies.

Later, working in male-dominated environments brought both challenges and rewards. But it's not always easy to be the minority, whatever that might look like, and it also relies on your colleagues having an open mind and being willing to listen.

Sunny: How important do you think creativity will be in the future, and how can students apply it and their originality beyond the arts?

Creativity and originality will remain essential, and perhaps become even more important. However, we may need to rethink how we define and evaluate it, focusing more on forms of creativity that are uniquely human.

AI and human intelligence are fundamentally different, and creativity may be one of the key ways we distinguish between them. This creates challenges for education, particularly in how we assess creativity and originality.

We need to reconsider evaluation methods so they better recognise human creativity in ways AI cannot replicate. That is a really important subject to tackle, because it is going to be part of what differentiates us.

Sunny: Following up, how should we transform the traditional idea of creativity into something more useful and valuable, and what aspects should we focus on to distinguish human creativity from AI?

I have a couple of thoughts, but I don't have all the answers. We should place greater emphasis on the creative process—what is learned, revealed and developed through it—rather than focusing only on the final product.

Emotional engagement is also important: both the creator’s immersion and the audience’s response. Creativity is closely tied to human experience and meaning. By evaluating process, impact and emotional resonance, we can better recognise and emphasise that distinctively human creativity.

Yuva: What is one quality that the best researchers and students share and why is it so important in your field?

You have to be furiously curious to be a good researcher. Leave your ego, your expectations and biases at the door and be truly curious if you really want to understand what is going on.

You also have to stay a good learner. I still learn new things all the time. In order to do that, I have to not be satisfied with the superficial answer. I have to always burrow underneath and actually grasp what's going on.

I want to understand the real story that we've got here in all its nuances, good and bad. That also means reporting what the research shows, even if results are unexpected or disappointing, rather than shaping findings to fit your hopes or the people funding your research project.

AI and the Future of Learning

The discussion then shifted to a bigger picture, as Sunny and Yuva explored how AI is changing education—its potential to personalise learning, the risk of increasing inequality, and how students should think about using it responsibly.

Sunny: What are the most exciting and meaningful applications of AI in classrooms today?

I frame this through the idea of the ‘exceptional learner’—someone who understands how they learn and continuously improves. We cannot predict exactly what subjects or skills are going to be the most important in the future, and where this AI narrative is going.

AI can support this by analysing learning behaviours and helping both students and teachers gain deeper insights. It enables more personalised learning and helps teachers better support each student.

My hope is that AI can help bring about a new era of intelligent human behaviour. These tools should not tempt us to take the easy route, but to achieve more. In this sense, AI will be both the catalyst and the tool for that future: it is already pushing us to increase the sophistication of our own human intelligence, while also becoming the tool that enables us to do so.

Yuva: How do we encourage people to see AI as a tool for growth rather than a shortcut?

We have to help people realise this is not the route to an easier life—it’s a route to a more rewarding one, where we can achieve a lot more.

But that’s challenging, because much of the narrative from the media and large technology companies suggests the opposite—that AI is here to make life easier. So we are battling against that.

Part of the answer lies in something very human: the sheer satisfaction of real achievement. You’ll recognise that feeling—when you accomplish something you didn’t think you could. We need to tap into that feeling and help people see that this is the bit you want, not the easier life.

I remember as a student being told I had to build a compiler, and I didn’t even know what one was. It was the steepest learning curve I’ve ever been through—late nights, constant effort—but when I actually built it and it ran, I can still feel that huge satisfaction. That’s what we want everyone to value.

So every time we use these tools, we need to ask ourselves: Is this making me smarter? Is this helping me to do more, or am I just taking the easy ride? That’s how we build more sophisticated thinking.

At the same time, we mustn’t shy away from the fact that these are commercial technologies. Companies need to make their products ‘sticky’, and sometimes that means conveying the message that this is about making your life easier—or even being overly agreeable. You have to watch out for that: the AI will tell you your cooked salmon is ‘lovely’ even though it can’t taste anything, just to keep you engaged. It’s charming, but a little insidious if you’re not attuned to it.

Yuva: How do you think AI could help break down some of the deep-rooted inequalities in education, like gender, income, or disabilities?

For much of my career as an academic, I saw AI’s primary benefit as creating hyper-personalised educational experiences at a fraction of the cost, reaching students who have no teachers or are otherwise left out. I do still believe AI has that potential.

What’s becoming clear, though, is that we have to work very hard to make that happen, because right now the opposite is happening. AI tools are actually bringing about greater inequality, and that is a real challenge.

Inequality shows up in many ways—from the different ways men and women use the tools, to how having more money allows access to better AI and gives a step up compared to peers who can’t afford it.

A report from the Sutton Trust here in the UK last year showed that the data AI models are trained on contains biases. As a result, unequal outcomes are baked in and can be reproduced uncritically unless we actively address them.

AI could help bring about greater equality, but it won’t happen on its own. As so often is the case with technology, the results depend on us: how we use it, and whether we make sure there’s equal access. It’s a profoundly challenging situation, but also an opportunity. At the moment, the challenge is outrunning the opportunity. We need to switch that round.

Sunny: Many people—like my parents and grandparents—aren’t as eager to embrace AI, and we sometimes disagree on new ideas. How do you navigate that, especially when it challenges traditional views of learning and knowledge?

I had a much gentler introduction to AI than a lot of people are having right now. So, I was able to embrace its potential benefits when the risks were not as profound and visible as they are now.

Today, the systems have become so powerful and so fast that AI is more challenging to come to terms with, especially for those encountering it for the first time. It can feel unfamiliar and overwhelming. I certainly also worry about AI, and do not think everything happening with it is positive.

I can sympathise with people who perhaps are just coming to AI for the first time. Because it sort of feels like magic, doesn't it? You can just type this question into a little box and immediately get all this information back. It can create pictures, movies, essays, letters, plans, whatever it is. It must feel very scary at times.

We are seeing a growing body of research evidence that people who have learned to use AI tools are not fully enabled by that alone. You need to understand the behaviours that AI is capable of in order to really get the best out of these tools.

Being transparent about these powerful technologies and recognising the risks, while also showing how you can reduce those risks, might make it easier for people to engage and see the potential benefits. But I understand that it is a real challenge, because it must seem very alien.

Yuva: What is the biggest ethical challenge with AI integration in education?

There are many, but I’m going to pick one we often ignore. That is the purpose of the AI. You can have the same technology using the same data and the same infrastructure. But if it is used in one way, it may be ethical. And if it's used in another, it may be unethical.

We need to think very carefully about the overarching purpose of AI, and find one that fits within our definition of what is ethical. After that, we can unpack all of the ethical complexities around data processing, algorithms and so on. That's a good place to start, and it often gets overlooked. So I would say, let's start with that.

Looking Ahead

The upcoming Education Advisory Board summit at Dulwich College (Singapore) will bring together global experts and student representatives to continue discussions like these in a live, collaborative setting.

This series demonstrates that our students are engaging in rigorous, high-level dialogue that reflects the values our schools instil: curiosity, critical thinking and responsibility. Through their conversations with Professor Luckin, they actively shape the discussion around AI and education, modelling the type of thoughtful, evidence-informed engagement that will be crucial in the future. Their capacity to question, reflect and contribute meaningfully demonstrates that our students are ready to lead, not just learn.

Stay tuned for the next episode, where students will continue to bring these values and insights to life.